When Brooklyn Center, Minnesota, police officer Kimberly Potter fired her handgun instead of her taser on April 11, killing Daunte Wright, she was not the first officer to call such a fatal action a mistake. According to the website Fatalencounters.org, sixteen police officers have done something similar. The weapons have a similar feel, both are worn in holsters on an officer’s belt, and both fire with finger pressure on a trigger.

That this weapon confusion could happen repeatedly over the course of decades has many in law enforcement calling for better training and urging officers to be more careful. But there’s a more thoughtful and effective solution, one that springs from commercial aviation, where pilots and other workers make blunders, lapses, oversights and omissions yet still contribute to the safest form of transportation.

In July 1970, an Air Canada DC-8 jetliner was just about to land at the airport in Toronto when the pilot deployed the spoilers, or airbrakes, destroying lift and sending the plane slamming to the ground, killing 109 people on board.

That the pilot made a mistake was never in doubt; even he knew it.

“Sorry. Oh! Sorry, Pete,” the first officer said to the captain as the plane stalled.

Humans will make mistakes. This is a basic tenet in aviation safety, an industry that leads most others in its focus on human performance. What aviation human factors experts also know is that telling people not to err does not work.

Bodycam footage suggests Officer Potter thought she was firing her taser, prompting calls for better and more frequent training in the use of these weapons. In aviation, tasers and handguns would likely be considered parts of the same system, and training on them would be combined and offered on a regular schedule.

And while boosting training is a good start, removing the source of confusion is even better. That lesson was learned the hard way with the DC-8’s spoiler design: after the Air Canada crash, three more accidents followed.

In a 1974 congressional summary of the accidents, one member made a point (emphasis in original) that foreshadows the police killing of Wright 47 years later.

“It was not the deliberate deployment that was the problem but the inadvertent action that endangered safety.”

After the Air Canada crash in 1970, the Federal Aviation Administration and Douglas Aircraft, the plane’s manufacturer, decided that warning pilots about the spoilers was sufficient. And so operators of the 500 DC-8s around the world were reminded that pilots should not extend the spoilers in flight, and a placard reading “Deployment in Flight Prohibited” was installed by the spoiler handle.

In Canada, however, an aviation official called the placard “ridiculous,” according to a transcript of the congressional review. “The wording might just as well have read, ‘Do not crash this plane,’” the unidentified Canadian said.

More than 100 people died in the Air Canada crash, yet fixing the design that had confused the pilots was not part of the solution.

Over the next two years, British and Japanese pilots made the same mistake, with disastrous consequences. Then, in June 1973, a Loftleidir Icelandic DC-8 landing at New York’s John F. Kennedy Airport slammed into the ground after a 46-second discussion on the flight deck about the proper way to arm the spoilers. Despite that discussion, the co-pilot inadvertently deployed them.

“No! No! No! No!” the flight engineer shouted. The resulting crash injured 38 of the 128 people on board.

Investigators, now for the fourth time, concluded that the pilots had erred. But this time they dug deeper, noting the lack of recovery time when mistakes are made so close to the ground.

Police officers aren’t pilots but both professions rely on people making critical decisions with plenty of pressure and very little time.

“When a person is intently paying attention to what they perceive as a threat, it is expected that they will not perceive the other stimulus around them,” writes Lewis Von Kliem, a criminal justice consultant at Force Science Institute.

“That includes factors that we would expect someone to notice under calmer circumstances—factors like the weight, shape, and color of a taser as compared to a full-size firearm.”

Von Kliem and many other human factors specialists know that a design that adds to this mental burden is problematic.

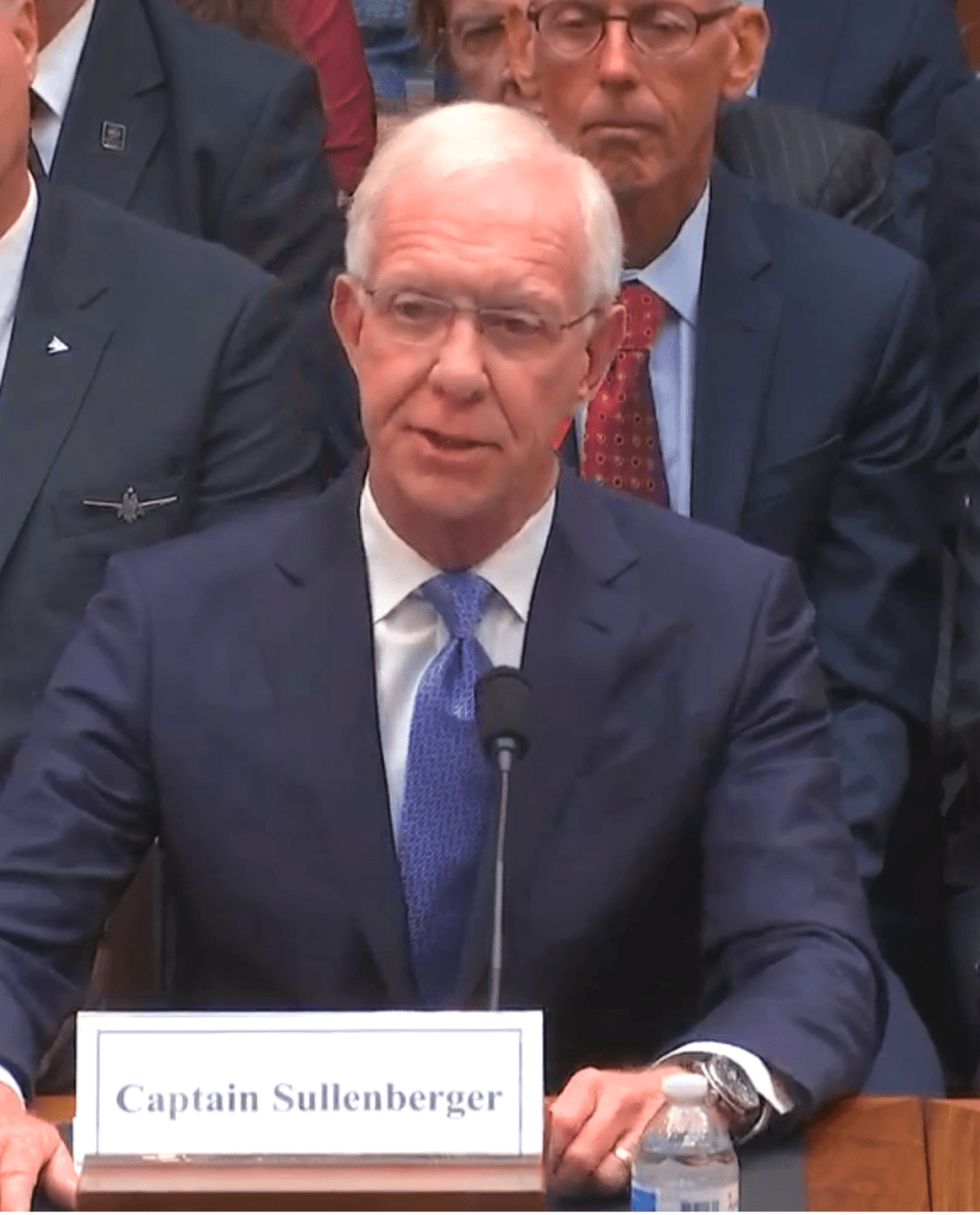

Forty-five years after Congress analyzed whether the DC-8 accidents could have been avoided, Capt. Chesley (Sully) Sullenberger testified before another congressional hearing looking into the crashes of two Boeing 737 Max airliners that killed 346 people in 2018 and 2019. Once again, it was a case of design confusing humans.

“We must make accurate assumptions about what is possible in extreme emergencies, given the distractions, the workload, the task saturation,” Sullenberger said. “We shouldn’t expect pilots to have to compensate for flawed designs.”

Nor should we expect that of police officers. Aviation provides a guide. Tasers should be clearly and abundantly distinctive from their lethal counterparts. Mistakes are inevitable but learning from them is a choice.

Author of The New York Times bestseller, The Crash Detectives, I am also a journalist, public speaker and broadcaster specializing in aviation and travel.

Excellent comparison. Food for thought in this recent tragic event. Many things to be learned.

It is more than regrettable that human factors is mostly confined to aviation. As the title ‘Human Factors’ suggests, it applies to all industries and professions and entities which employ humans.

If we must choose which field should adopt it first, the answer is medicine.

I well remember the Air Canada crash from when I was a 15-year-old aerogeek in Toronto. That crew had also discussed the possible advantages of deploying the spoilers just before touchdown…

Piston aircraft have long had differently shaped knobs on the throttle, mixture and prop-pitch controls.

It sounds incredibly dumb to give cops a lethal weapon that feels so similar to a non-lethal one.